Remote usability testing is nothing new. For international clients and hard-to-reach audiences, remote testing has been a valuable approach used by many for years.

While the move to a completely virtual way of working came as a bit of a shock to us, as it did to everyone, the transition has been relatively pain-free thanks to the technology and methods already available to all of us in the UX world.

In fact, moving exclusively to remote testing has highlighted some of the advantages of this approach, like the unexpected ethnographic insights we can glean from speaking to people in their homes (and the number of pets that want to get involved in research!).

The one area of usability testing that has been hard to replicate exactly in a remote environment is mobile research.

Why hands are important

When testing on desktop devices, we can share screen and mouse control with the tester. This lets us see both what they are looking at and the actions they take on the website (in other words, what their mouse pointer is doing). As a result, there is a logical link between what the tester does and the result we see on the screen.

With remote mobile testing, this is not so straightforward. Remote testing platforms such as Lookback allow you to test on mobile by installing an app on the participant's phone and then sharing the screen, microphone and front-facing camera.

The problem with this approach is that you only see the screen, not the action the tester is taking. You will see the screen change, but not what they did to cause that change (e.g. a tap on a CTA or an in-page link).

Hands, and more specifically what they are doing, are very important when testing on mobile devices. In the lab, we use an overhead camera to capture these hand movements, along with all the scrolling, tapping and hesitation that goes with them.

Without hands, you only see part of the story, and it can become disorientating for observers because they can’t always follow the tester’s intent and journey through the site.

How to capture mobile testing with hands

We have yet to find a foolproof solution to this problem; however, we have devised a possible workaround.

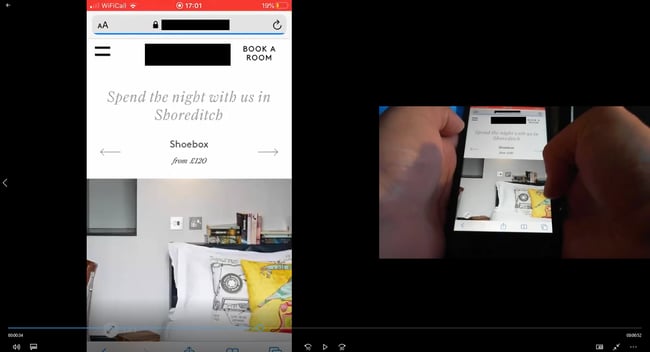

Using a combination of devices (laptop and smartphone), Zoom screen sharing and an additional webcam, we have created the ‘at-home mobile testing setup’.

At-home mobile testing rig: adapting to the situation to get the best-quality insights!

Screen capture: using an additional webcam to capture both the hand movements and the screen.

It is by no means the easiest setup and requires a degree of tech savviness from our testers, so it is certainly not suitable for all projects and testers. It also requires the tester to have a spare webcam or to have one posted to them, which adds a further layer of complexity.

However, it does give us the missing information: what their hands are doing. For that, we think it is worth the small additional investment in a webcam.

Like everyone else, we are finding that these new solutions are a case of trial and error. If anyone out there has developed a similar or better solution, we’d love to hear about it!
